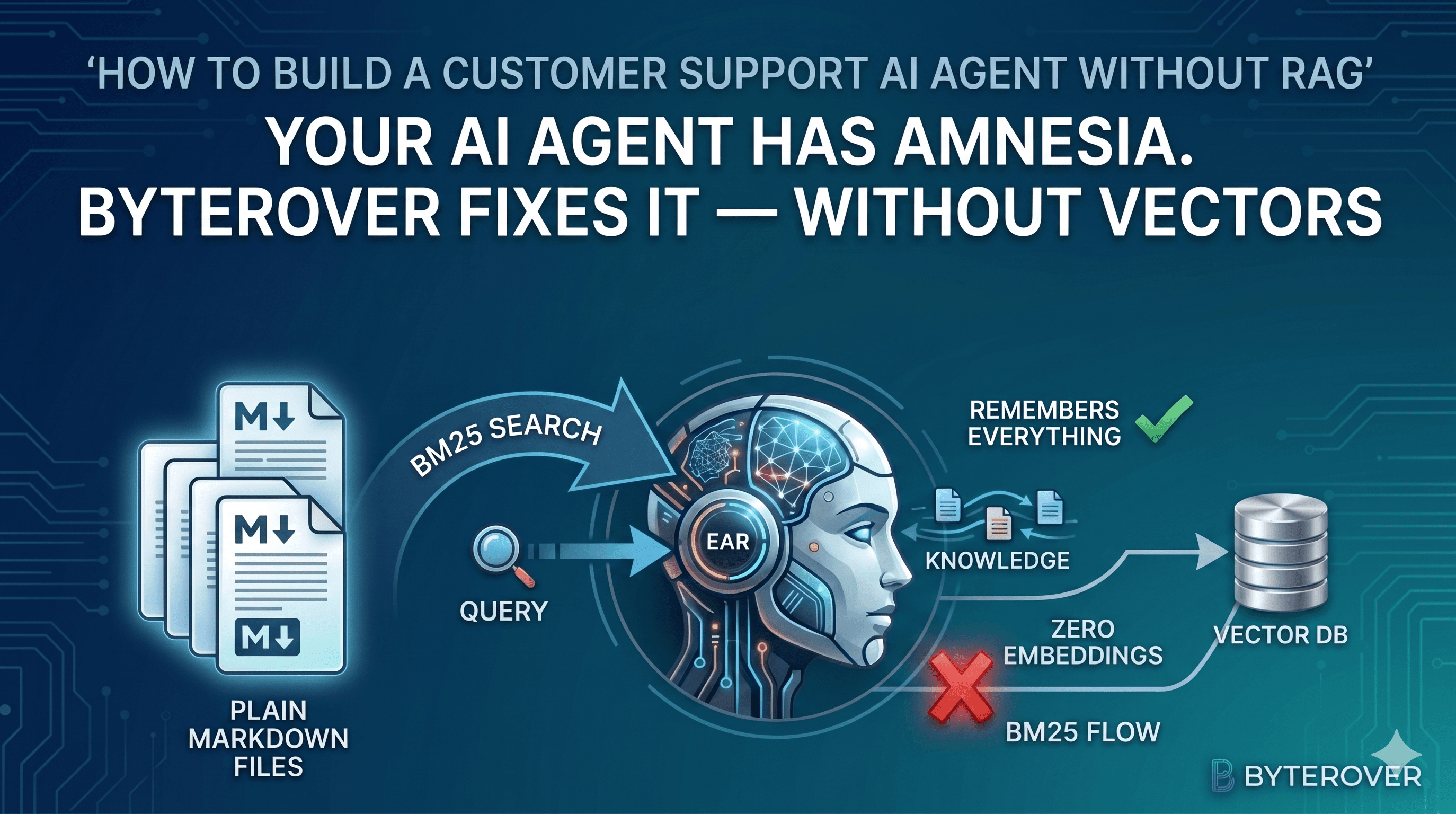

No RAG. No Vectors. No Amnesia. Here's How to Build a Smarter Support Agent with Persistent Memory

Your AI Agent Has Amnesia. ByteRover Fixes It — Without Vectors

Let's see how to build a customer support agent that remembers everything, using plain markdown files, BM25 search, zero embeddings, and a purely vectorless approach.

01 — The Problem

RAG promised AI memory. It lied.

We've been building RAG systems the same way for years. Chunk your docs. Embed them into vectors. Store in a vector database. At query time, compute similarity and retrieve the top-k chunks. Feed to LLM. Hope for the best.
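For readers who have not built one, the retrieval step in that pipeline can be sketched in a few lines. This is a toy illustration with hand-written 3-dimensional vectors standing in for real embeddings; the function names and chunk ids are made up for the example.

```javascript
// Cosine similarity between two equal-length numeric vectors.
function cosineSimilarity(a, b) {
  let dot = 0, normA = 0, normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  if (normA === 0 || normB === 0) return 0;
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Return the k chunks whose vectors are closest to the query vector.
function topK(queryVector, chunks, k) {
  return chunks
    .map((chunk) => ({ ...chunk, score: cosineSimilarity(queryVector, chunk.vector) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, k);
}

// Toy "embeddings"; real ones have hundreds of dimensions.
const chunks = [
  { id: "refund-policy", vector: [0.9, 0.1, 0.0] },
  { id: "return-window", vector: [0.8, 0.3, 0.1] },
  { id: "shipping", vector: [0.1, 0.2, 0.9] },
];
const results = topK([1, 0, 0], chunks, 2);
console.log(results.map((r) => `${r.id}: ${r.score.toFixed(3)}`));
```

Notice how close the first two scores are. Nothing in the output explains why one chunk beat the other, which is the debugging problem the rest of this post is about.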

It works. Until it doesn't. And when it stops working, you have no idea why.

I've built RAG pipelines with ChromaDB, Pinecone, and Weaviate. I've implemented HyDE, reranking, query rewriting, MMR, and hybrid search. Every optimisation added complexity and every deployment added new failure modes I couldn't debug. The core problem was never the retrieval algorithm — it was that the knowledge store was a black box.

You cannot cat an embedding vector and understand what your agent knows. You cannot open Pinecone in VS Code and read what it stored. When retrieval fails silently, you are left guessing.

Three real pain points that drove me to look for something different:

① Similarity Search Degrades Silently: As your knowledge base grows, cosine similarity loses precision. A query about "refund policy" starts returning chunks about "return window" and "shipping" because everything is semantically close. You don't get an error; you get subtly wrong answers.

② Your Agent Forgets Between Sessions: Traditional RAG has no persistent agent memory. Every session starts cold. The agent doesn't remember what it learned last time, what conventions it figured out, or what the customer told it yesterday.

③ The Knowledge Store is a Black Box: Vector databases store opaque numerical representations. When retrieval fails, you cannot inspect what was stored, how it was chunked, or why a query matched the wrong document. Debugging means adding more logs, not reading the data.

Then I came across the PageIndex and ByteRover approach, a simple idea that turned my thinking upside down. Instead of embedding documents, what if an LLM simply read them, reasoned about them, and stored the knowledge in a human-readable hierarchy? No similarity search. No vectors. Just files.

ByteRover and similar tools try to productionise exactly this idea. After experimenting with it for months, I'm not going back.

02 — The Idea

No embeddings. No similarity search. Just files.

The insight is embarrassingly simple. When you want to find information in a technical manual, you don't compute cosine similarity between your query and every page. You open the table of contents, navigate to the right chapter, then the right section.

ByteRover makes your AI agent do the same thing — but for any knowledge base you give it.

Traditional RAG vs ByteRover — Side by Side

| Step | Traditional RAG | ByteRover |
|---|---|---|
| Ingest | Chunk into 512-token fragments | LLM reads and reasons about full doc |
| Store | Compute embeddings → Vector DB | Organise into markdown hierarchy |
| Retrieve | Cosine similarity (black box) | BM25 + Cache + LLM reasoning |
| Debug | Impossible: opaque vectors | Open the .md file and read it |
| Version control | Not possible | git diff your knowledge |
| Infrastructure | Vector DB + embedding service | Zero: just local files |

The critical difference: everything ByteRover stores is human-readable. You can open .brv/context-tree/ in your file explorer and read exactly what your agent knows. You can git diff what changed after a curation. You can delete a file if the knowledge is wrong. It's just files.

Key Insight: ByteRover is not a retrieval optimisation on top of vector search. It is a fundamentally different approach: LLM-curated, file-based knowledge that you can read, edit, version-control, and debug without any special tooling.

03 — How It Works

The architecture, explained simply

ByteRover has two main operations: Curation (writing to memory) and Query (reading from memory). Both run entirely on your local machine. No cloud required.

The Context Tree — Local File Structure

All knowledge lives in .brv/context-tree/ as a three-level hierarchy. Every node is a folder with a context.md describing its purpose. Every piece of knowledge is a plain markdown file.

.brv/context-tree/
├── orders/                              # Domain
│   ├── context.md                       # auto-generated overview
│   ├── _index.md                        # auto-generated summary
│   ├── cancellation/                    # Topic
│   │   ├── context.md
│   │   └── order_cancellation_policy.md
│   └── wrong-items/
│       └── wrong_item_resolution.md
├── payments/
│   ├── context.md
│   ├── payment-methods/
│   │   └── supported_payment_methods.md
│   └── refunds/
│       ├── refund_timelines.md
│       └── cod_refund_policy.md
├── shipping/
│   ├── context.md
│   └── delivery-timelines/
│       └── city_delivery_windows.md
└── returns/
    ├── context.md
    └── return-window/
        └── return_eligibility.md

This is the entire knowledge store. No database. No server. A folder you can open in Finder or Explorer.
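Because the store is only a folder, ordinary file APIs are enough to inspect it. Here is a minimal sketch that lists every markdown file under a tree. The helper name listKnowledgeFiles is hypothetical, and the demo builds a throwaway directory rather than reading a real .brv/context-tree/.

```javascript
import { mkdtempSync, mkdirSync, writeFileSync, readdirSync } from "node:fs";
import { tmpdir } from "node:os";
import { join, relative } from "node:path";

// Recursively collect every .md file under a context-tree root.
function listKnowledgeFiles(root, dir = root) {
  const found = [];
  for (const entry of readdirSync(dir, { withFileTypes: true })) {
    const full = join(dir, entry.name);
    if (entry.isDirectory()) found.push(...listKnowledgeFiles(root, full));
    else if (entry.name.endsWith(".md")) found.push(relative(root, full));
  }
  return found.sort();
}

// Demo on a temporary directory that mimics a small part of the layout.
const demoRoot = mkdtempSync(join(tmpdir(), "context-tree-"));
mkdirSync(join(demoRoot, "payments", "refunds"), { recursive: true });
writeFileSync(join(demoRoot, "payments", "context.md"), "# Payments\n");
writeFileSync(
  join(demoRoot, "payments", "refunds", "refund_timelines.md"),
  "# Refund timelines\n"
);

const files = listKnowledgeFiles(demoRoot);
console.log(files);
```

Pointing the same function at your own context tree would give you a quick inventory of what the agent knows, assuming the layout matches the one shown here.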

Curation — Writing to Memory

When you call brv curate -d docs/, ByteRover's LLM runs in a sandbox with a ToolsSDK. It reads your documents, reasons about the content, and writes structured knowledge using five atomic operations:

| Operation | What It Does |
|---|---|
| ADD | Creates a new knowledge file. Auto-generates context.md at each hierarchy level |
| UPDATE | Modifies an existing entry. Resets recency, boosts importance score |
| UPSERT | Creates if new, updates if exists; no pre-check needed |
| MERGE | Combines two entries intelligently, deletes source |
| DELETE | Removes a file or entire subtree |

The LLM gets feedback on every operation. If a MERGE fails, it sees the error and retries with a corrected approach. This stateful feedback loop means the system doesn't silently drop errors — it surfaces them as actionable information back to the agent.
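To make the feedback loop concrete, here is a rough in-memory sketch of the five operations. It is not ByteRover's implementation: the store is a plain Map, the merge is a naive append, and every name is invented for illustration. The point is only that each operation returns a result the caller can react to.

```javascript
// In-memory stand-in for the context tree: path -> markdown text.
// Every operation returns { ok, error? } so the caller can see failures.
function createStore() {
  const files = new Map();
  const fail = (error) => ({ ok: false, error });
  const ops = {
    add(path, text) {
      if (files.has(path)) return fail(`ADD failed: ${path} already exists`);
      files.set(path, text);
      return { ok: true };
    },
    update(path, text) {
      if (!files.has(path)) return fail(`UPDATE failed: ${path} not found`);
      files.set(path, text);
      return { ok: true };
    },
    upsert(path, text) {
      files.set(path, text); // create or overwrite, no pre-check
      return { ok: true };
    },
    merge(sourcePath, targetPath) {
      if (!files.has(sourcePath)) return fail(`MERGE failed: ${sourcePath} not found`);
      if (!files.has(targetPath)) return fail(`MERGE failed: ${targetPath} not found`);
      // Naive merge: append the source text, then delete the source.
      files.set(targetPath, `${files.get(targetPath)}\n${files.get(sourcePath)}`);
      files.delete(sourcePath);
      return { ok: true };
    },
    delete(prefix) {
      // Removes a single file or every file under a folder prefix.
      let removed = 0;
      for (const path of [...files.keys()]) {
        if (path === prefix || path.startsWith(`${prefix}/`)) {
          files.delete(path);
          removed++;
        }
      }
      return removed > 0 ? { ok: true } : fail(`DELETE failed: nothing under ${prefix}`);
    },
  };
  return { files, ...ops };
}

const store = createStore();
store.add("payments/refunds/upi.md", "UPI refunds: 2-3 business days");
const failedMerge = store.merge("payments/refunds/cards.md", "payments/refunds/upi.md");
console.log(failedMerge.error); // the message an agent would see before retrying
store.upsert("payments/refunds/cards.md", "Card refunds: 5-7 business days");
const retriedMerge = store.merge("payments/refunds/cards.md", "payments/refunds/upi.md");
console.log(retriedMerge.ok, [...store.files.keys()]);
```

The failed merge returns a readable error instead of throwing or silently doing nothing, which is the property the curation loop relies on.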

After curation, ByteRover also extracts structured Facts from your content — project conventions, architecture decisions, rules — and appends them directly to each markdown file:

## Facts

- **refund_upi**: UPI refunds processed within 2-3 business days [convention]

- **refund_card**: Credit/Debit card refunds take 5-7 business days [convention]

- **cod_refund**: COD refunds issued as ShopEase Wallet credits only [project]
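Since facts are plain markdown lines, they are easy to read back programmatically. The parser below is a small sketch that assumes every fact follows the exact shape shown above (a bolded key, a colon, a statement, and a bracketed tag); parseFacts is a hypothetical helper, not part of ByteRover.

```javascript
// Parse lines shaped like: - **key**: statement [tag]
function parseFacts(markdown) {
  const factLine = /^- \*\*(.+?)\*\*:\s*(.+?)\s*\[(\w+)\]\s*$/;
  const facts = [];
  for (const line of markdown.split("\n")) {
    const match = line.trim().match(factLine);
    if (match) facts.push({ key: match[1], statement: match[2], tag: match[3] });
  }
  return facts;
}

const sample = `## Facts
- **refund_upi**: UPI refunds processed within 2-3 business days [convention]
- **cod_refund**: COD refunds issued as ShopEase Wallet credits only [project]`;

const facts = parseFacts(sample);
console.log(facts);
```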

Query — The 5-Tier Retrieval Pipeline

This is where ByteRover really shines. Every query goes through a tiered strategy that starts with the cheapest possible path and escalates only when needed. Most queries resolve in under 200ms — without touching an LLM at all.

| Tier | Name | Speed | How It Works |
|---|---|---|---|
| 0 | Exact Cache | ~0ms | MD5 fingerprint match: same query, cached result (60s TTL) |
| 1 | Fuzzy Cache | ~50ms | ≥60% token similarity to a recent query |
| 2 | BM25 Direct | ~200ms | Full-text search. If the top result scores high, return immediately. No LLM. |
| 3 | LLM Pre-fetch | <5s | BM25 results injected as context for a single LLM synthesis call |
| 4 | Agentic Loop | 8–15s | Full multi-step reasoning: reads files, follows relations, iterates |
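The two cache tiers are simple enough to sketch. The version below takes the 60s TTL and 60% threshold from the table; everything else is an assumption, in particular the use of Jaccard overlap as the "token similarity" measure and the normalisation applied before hashing.

```javascript
import { createHash } from "node:crypto";

const TTL_MS = 60_000;       // 60s TTL, from the table
const FUZZY_THRESHOLD = 0.6; // 60% token similarity, from the table

const normalize = (q) => q.toLowerCase().trim().replace(/\s+/g, " ");
const fingerprint = (q) => createHash("md5").update(normalize(q)).digest("hex");
const tokens = (q) => new Set(normalize(q).split(" "));

// Jaccard overlap is an assumed measure; the real one may differ.
function tokenSimilarity(a, b) {
  const ta = tokens(a), tb = tokens(b);
  const shared = [...ta].filter((t) => tb.has(t)).length;
  return shared / (ta.size + tb.size - shared);
}

function createQueryCache(now = () => Date.now()) {
  const entries = new Map(); // fingerprint -> { query, result, storedAt }
  return {
    set(query, result) {
      entries.set(fingerprint(query), { query, result, storedAt: now() });
    },
    // Returns { tier, result } on a hit, or null so the caller can escalate.
    get(query) {
      const fresh = (e) => now() - e.storedAt <= TTL_MS;
      const exact = entries.get(fingerprint(query));
      if (exact && fresh(exact)) return { tier: 0, result: exact.result };
      for (const e of entries.values()) {
        if (fresh(e) && tokenSimilarity(query, e.query) >= FUZZY_THRESHOLD) {
          return { tier: 1, result: e.result };
        }
      }
      return null;
    },
  };
}

// Injected clock so the TTL can be demonstrated without waiting.
let clock = 0;
const cache = createQueryCache(() => clock);
cache.set("refund policy for UPI payments", "UPI refunds: 2-3 business days");
const exactHit = cache.get("Refund policy for UPI payments");
const fuzzyHit = cache.get("refund policy for UPI");
const miss = cache.get("delivery time to Pune");
clock = 61_000;
const expired = cache.get("refund policy for UPI payments");
console.log(exactHit, fuzzyHit, miss, expired);
```

A null result is the signal to fall through to the next tier, which is where the BM25 search would run.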

Tiers 0–2 bypass the LLM entirely. The compound scoring formula that ranks results:

score = (0.6 × BM25) + (0.25 × importance) + (0.15 × recency) × tierBoost

Where importance accumulates every time a file is queried (+3) or updated (+5), and recency decays exponentially over time. Files that are frequently accessed naturally rank higher — the knowledge base gets smarter the more you use it.
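Here is one way the scoring could look in code. Only the three weights and the +3/+5 bumps come from the description above. The half-life, the importance cap used to normalise to a 0..1 range, and the reading that tierBoost multiplies the whole weighted sum are all assumptions made for this sketch.

```javascript
const HALF_LIFE_DAYS = 30;  // assumed decay rate
const IMPORTANCE_CAP = 100; // assumed cap, used to normalise importance to 0..1

// Exponential decay: 1.0 when just touched, 0.5 after one half-life.
function recency(daysSinceTouched) {
  return Math.pow(0.5, daysSinceTouched / HALF_LIFE_DAYS);
}

// +5 when a file is updated, +3 when it is queried.
function bumpImportance(file, event) {
  const delta = event === "update" ? 5 : 3;
  return { ...file, importance: Math.min(IMPORTANCE_CAP, file.importance + delta) };
}

// bm25 is expected to be normalised to 0..1 before being combined.
function compoundScore({ bm25, importance, daysSinceTouched }, tierBoost = 1) {
  const weighted =
    0.6 * bm25 +
    0.25 * (importance / IMPORTANCE_CAP) +
    0.15 * recency(daysSinceTouched);
  return weighted * tierBoost; // assumption: boost applies to the whole sum
}

const stale = { bm25: 0.8, importance: 10, daysSinceTouched: 60 };
const popular = bumpImportance(
  { bm25: 0.8, importance: 40, daysSinceTouched: 0 },
  "query"
);
console.log(compoundScore(stale).toFixed(4), compoundScore(popular).toFixed(4));
```

Both files match the query text equally well, yet the recently used, frequently accessed one ranks higher. That is the "gets smarter with use" effect in miniature.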

Out-of-Domain Detection: When a query has no good match in the knowledge tree, ByteRover tells you explicitly: "this topic isn't covered — curate relevant knowledge first." No hallucinated answers. No confident wrong responses.
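A score threshold is one plausible way to get this behaviour. The sketch below implements a small textbook BM25 ranker and refuses to answer when the best score falls under a cut-off. The MIN_SCORE value, the tokenizer, and the function names are illustrative assumptions and say nothing about how ByteRover actually decides.

```javascript
const K1 = 1.5, B = 0.75; // common BM25 defaults
const tokenize = (text) => text.toLowerCase().match(/[a-z0-9]+/g) ?? [];

function bm25Search(query, docs) {
  const tokenized = docs.map((d) => ({ id: d.id, terms: tokenize(d.text) }));
  const avgLen = tokenized.reduce((sum, d) => sum + d.terms.length, 0) / tokenized.length;
  const docFreq = new Map(); // term -> number of docs containing it
  for (const d of tokenized) {
    for (const term of new Set(d.terms)) docFreq.set(term, (docFreq.get(term) ?? 0) + 1);
  }
  return tokenized
    .map((d) => {
      let score = 0;
      for (const term of new Set(tokenize(query))) {
        const tf = d.terms.filter((t) => t === term).length;
        if (tf === 0) continue;
        const n = docFreq.get(term);
        const idf = Math.log(1 + (tokenized.length - n + 0.5) / (n + 0.5));
        score += (idf * tf * (K1 + 1)) / (tf + K1 * (1 - B + (B * d.terms.length) / avgLen));
      }
      return { id: d.id, score };
    })
    .sort((a, b) => b.score - a.score);
}

// Hypothetical cut-off: below it, report "not covered" instead of answering.
const MIN_SCORE = 0.5;
function answerOrRefuse(query, docs) {
  const [best] = bm25Search(query, docs);
  if (!best || best.score < MIN_SCORE) return { covered: false, id: null };
  return { covered: true, id: best.id };
}

const docs = [
  { id: "refund_timelines.md", text: "UPI refunds are processed within 2-3 business days" },
  { id: "city_delivery_windows.md", text: "Delivery to metro cities takes 1-2 days" },
  { id: "return_eligibility.md", text: "Items can be returned within the return window" },
];
const inDomain = answerOrRefuse("UPI refunds", docs);
const outOfDomain = answerOrRefuse("warranty for laptops", docs);
console.log(inDomain, outOfDomain);
```

Because BM25 scores are built from literal term matches, a query with no overlapping terms scores zero. That makes "nothing matched" easy to detect, unlike cosine similarity, which always returns some nearest neighbour.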

Session Learning — Memory That Grows

After every session, ByteRover automatically extracts durable knowledge from the conversation — patterns you used, decisions you made, preferences you expressed — and persists them as agent memories across five categories:

| Category | What It Captures |
|---|---|
| Patterns | Reusable code or workflow patterns |
| Preferences | User style, naming, structure decisions |
| Entities | Key files, modules, APIs, dependencies |
| Decisions | Architectural choices (immutable log) |
| Skills | Tool invocation recipes that worked |

Your agent gets smarter over time without any extra work.

Two layers of memory working together:

| Layer | Tool | What it remembers |
|---|---|---|
| Knowledge memory | ByteRover | ShopEase policies, timelines, rules |
| Session memory | conversationHistory array | What this customer said today |

04 — Building the Agent

A customer support agent with ByteRover memory

Let's build a real thing. A customer support agent for ShopEase — a fictional Indian e-commerce platform. The agent will answer questions about orders, payments, shipping, and refunds using ByteRover as its memory layer and Groq (Llama 3.3 70B) for responses.

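No vector database. No embeddings. The entire retrieval happens through brv query, a subprocess call that returns the relevant markdown context, typically in well under a second when a cache or BM25 tier can answer and in up to about 15 seconds when the query escalates to the LLM tiers.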

Agent Flow:

Customer question

│

▼

brv query "..." ← subprocess to ByteRover daemon

│

▼

ByteRover 5-tier retrieval

Tier 0/1 → cache hit ~0ms

Tier 2 → BM25 match ~200ms (no LLM)

Tier 3/4 → LLM reason <15s

│

▼

Retrieved context (markdown)

│

▼

Groq / Llama 3.3 70B

system prompt + context + conversation history

│

▼

Support response → Customer

Project Structure

support-agent/
├── docs/
│   ├── faq.md                 # ShopEase FAQ
│   ├── shipping-policy.md     # Shipping rules
│   └── refund-policy.md       # Refund rules
├── src/
│   ├── curate.js              # Feeds docs → ByteRover memory
│   ├── query.js               # Queries ByteRover memory
│   └── agent.js               # Main chat loop
├── .env.example
└── package.json

Step 1 — Install ByteRover

# Install ByteRover CLI (starts a local daemon)

curl -fsSL https://www.byterover.dev/install.sh | sh

# Verify it's running

brv status

# Install project dependencies

npm install

Step 2 — The Sample Docs

Create these three files in docs/. ByteRover will reason about them during curation and organise them into the context tree.

docs/faq.md — covers orders, payments, shipping, returns, account management.

docs/shipping-policy.md — dispatch timelines, shipping partners, failed deliveries, COD rules.

docs/refund-policy.md — refund eligibility, timelines by payment method, COD refunds, escalations.

You can find the full code implementation and content of all three docs in the GitHub repo.

Step 3 — The Query Helper (src/query.js)

This is the core of the integration. brv query runs as a subprocess against the local ByteRover daemon. It returns plain text — the retrieved context from your markdown knowledge tree. Twenty lines. No SDK. No API key for ByteRover.

import { exec } from "child_process";

import { promisify } from "util";

const execAsync = promisify(exec);

/**

* Queries ByteRover's local context tree.

*

* ByteRover's 5-tier strategy handles routing automatically:

* Tier 0/1 → cache hit ~0ms

* Tier 2 → BM25 direct ~200ms (no LLM needed)

* Tier 3/4 → LLM reasoning <15s

*/

export async function queryMemory(question) {

try {

// Escape every character that stays special inside double quotes in a POSIX shell: " \ $ `

const sanitized = question.replace(/["\\$`]/g, "\\$&");

const { stdout, stderr } = await execAsync(

`brv query "${sanitized}"`,

{ timeout: 30000 } // 30s covers Tier 4 agentic loop

);

if (stderr && !stdout) {

console.error("[ByteRover] Error:", stderr);

return null;

}

return stdout.trim();

} catch (err) {

console.error("[ByteRover] Query failed:", err.message);

return null;

}

}
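If you would rather not build a shell command from customer input at all, Node's execFile passes arguments straight to the program with no shell in between. The sketch below is an alternative, not the helper used in this post: runCli and queryMemorySafe are hypothetical names, and it assumes brv query accepts the question as a single argument. The demo runs the Node binary itself so it works without ByteRover installed.

```javascript
import { execFile } from "node:child_process";
import { promisify } from "node:util";

const execFileAsync = promisify(execFile);

// Runs a CLI without a shell: each argument reaches the program verbatim,
// so quotes, $ and backticks in user input are never interpreted.
async function runCli(binary, args, timeoutMs = 30_000) {
  try {
    const { stdout } = await execFileAsync(binary, args, { timeout: timeoutMs });
    return stdout.trim() || null;
  } catch (err) {
    console.error(`[${binary}] failed:`, err.message);
    return null;
  }
}

// Hypothetical replacement for queryMemory (assumes `brv` is on PATH).
const queryMemorySafe = (question) => runCli("brv", ["query", question]);

// Demo: echo a hostile-looking string back through a child process.
const tricky = 'refund "policy" $(whoami) `date`';
const echoed = await runCli(process.execPath, [
  "-e",
  "console.log(process.argv[1])",
  tricky,
]);
console.log(echoed);
```

The string comes back unchanged, which shows that nothing along the way tried to expand the command substitution.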

Step 4 — Curate Your Docs (src/curate.js)

Run this once before starting the agent. ByteRover reads all files in docs/, reasons about the content, and organises everything into the context tree. After this runs, you can literally cat .brv/context-tree/payments/refunds/refund_timelines.md and read what your agent knows.

import { exec } from "child_process";

import { promisify } from "util";

import path from "path";

import { fileURLToPath } from "url";

const execAsync = promisify(exec);

const __dirname = path.dirname(fileURLToPath(import.meta.url));

const DOCS_DIR = path.resolve(__dirname, "../docs");

/**

* Curates all support docs into ByteRover's local context tree.

*

* ByteRover's curation engine:

* 1. Reads and reasons about each document

* 2. Organises into Domain → Topic → Subtopic hierarchy

* 3. Stores as plain markdown in .brv/context-tree/

* 4. Generates _index.md summaries at every level

* 5. Extracts structured facts (policies, timelines, rules)

*

* Run once. Re-run whenever docs are updated.

*/

async function curateDocs() {

console.log("🔄 Curating support docs into ByteRover memory...");

console.log(` Source: ${DOCS_DIR}\n`);

const { stdout } = await execAsync(

// -d flag: curate entire directory at once

`brv curate "ShopEase support docs: FAQs, shipping, refunds" -d "${DOCS_DIR}"`,

{ timeout: 120000 } // 2 min — multiple LLM calls during curation

);

console.log(stdout);

console.log("✅ Done. Run `npm run chat` to start the agent.");

console.log(" Tip: run `brv vc status` to see what was stored.\n");

}

curateDocs().catch(console.error);

Step 5 — The Main Agent (src/agent.js)

import Groq from "groq-sdk";

import readline from "readline";

import { queryMemory } from "./query.js";

import "dotenv/config";

const groq = new Groq({ apiKey: process.env.GROQ_API_KEY });

const MODEL = "llama-3.3-70b-versatile"; // Open-source via Groq

// Session memory — what this customer said today

const conversationHistory = [];

const SYSTEM_PROMPT = `You are a helpful customer support agent for ShopEase — an Indian e-commerce platform.

Use ONLY the retrieved context to answer. Never invent policies or timelines.

If the context doesn't cover the question, say: "Please contact support at 1800-123-4567."

Keep responses under 150 words.`;

/**

* For each user message:

* 1. Query ByteRover for relevant context (no similarity search)

* 2. Inject context into the message

* 3. Call Groq with full conversation history

* 4. Return and store the response

*/

async function chat(userMessage) {

// Step 1 — Retrieve relevant context from ByteRover

process.stdout.write("\n🔍 Querying ByteRover memory...");

const context = await queryMemory(userMessage);

process.stdout.write(" done\n\n");

if (!context) {

return "Knowledge base unavailable. Please call 1800-123-4567.";

}

// Step 2 — Inject context into the message

const enrichedMessage = `RETRIEVED CONTEXT FROM BYTEROVER:

---

${context}

---

CUSTOMER QUESTION: ${userMessage}`;

conversationHistory.push({ role: "user", content: enrichedMessage });

// Step 3 — Call Groq with system prompt + full history

const response = await groq.chat.completions.create({

model: MODEL,

messages: [

{ role: "system", content: SYSTEM_PROMPT },

...conversationHistory,

],

temperature: 0.3, // Low — factual support, not creative

max_tokens: 512,

});

const reply = response.choices[0].message.content;

// Store only the reply text. User turns in history keep their injected context, so prompts grow with each turn

conversationHistory.push({ role: "assistant", content: reply });

return reply;

}

async function main() {

const rl = readline.createInterface({

input: process.stdin,

output: process.stdout,

});

console.log("ShopEase Support Agent — ByteRover + Groq Llama 3.3 70B");

console.log('Type your question. Type "exit" to quit.\n');

const ask = () => {

rl.question("You: ", async (input) => {

const msg = input.trim();

if (!msg) return ask();

if (msg === "exit") { rl.close(); return; }

const reply = await chat(msg);

console.log(`Agent: ${reply}\n`);

ask();

});

};

ask();

}

main();
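One variation worth considering, which is not what the agent above does, is to keep the retrieved context out of the stored history and attach it only to the current turn. That keeps prompts from growing with every question. The helper names and the ten-turn cap below are assumptions for the sake of the sketch.

```javascript
// History stores bare questions and replies; retrieved context is attached
// only to the message for the current turn.
function buildMessages(systemPrompt, history, question, context) {
  const enriched =
    `RETRIEVED CONTEXT:\n---\n${context}\n---\nCUSTOMER QUESTION: ${question}`;
  return [
    { role: "system", content: systemPrompt },
    ...history,
    { role: "user", content: enriched },
  ];
}

// Returns a new history array with this turn appended and old turns trimmed.
function recordTurn(history, question, reply, maxTurns = 10) {
  const next = [
    ...history,
    { role: "user", content: question },
    { role: "assistant", content: reply },
  ];
  return next.slice(-maxTurns * 2); // two messages per turn
}

let history = [];
history = recordTurn(history, "How long do UPI refunds take?", "2-3 business days.");
const messages = buildMessages(
  "You are a support agent.",
  history,
  "And for cards?",
  "Card refunds take 5-7 business days"
);
console.log(messages.length, history.length);
```

The tradeoff is that on later turns the model sees its earlier answers but not the context that produced them, so follow-up questions depend on a fresh retrieval each time.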

Running It

# 1. Add your Groq API key

cp .env.example .env

# Edit .env → GROQ_API_KEY=your_key_here

# 2. Curate docs into ByteRover memory (run once)

npm run curate

# 3. Inspect what ByteRover stored

brv vc status

brv query "refund policy UPI"

# 4. Start the agent

npm run chat

The wow moment: After running npm run curate, open .brv/context-tree/ in your file explorer. You'll see your support docs organised into readable markdown files by domain and topic: exactly what your agent knows, readable by a human, editable with any text editor. No black box. No guessing.

05 — Honest Tradeoffs

When to use it. When not to.

ByteRover is not a drop-in replacement for all RAG use cases.

✅ Use ByteRover when:

- Your agent needs persistent memory across sessions
- You want to onboard a codebase your AI coding agent should understand
- You're working with private or regulated data (local-first, no cloud dependency required)
- You want to debug why retrieval failed by simply opening the markdown file
- Your knowledge base has clear structure (policies, docs, conventions, code patterns)

❌ Skip it when:

- You need pure semantic similarity search over millions of unstructured documents
- Your queries are one-shot with no repeated patterns, so the curation overhead isn't worth it
- Your data changes in real time, faster than you can re-curate
- Multi-session synthesis is your primary use case (ByteRover is still improving here)

06 — Wrapping Up

The model is interchangeable. The memory architecture isn't.

I've been building RAG systems for two years. The assumption I carried throughout — that embeddings and vector similarity search are the only serious approach — turned out to be wrong.

ByteRover showed me a different model: curate knowledge once, query it intelligently forever. Files you can read. Structure you can understand. Memory that persists across sessions. A retrieval system that escalates through tiers rather than blindly computing similarity over everything.

The support agent we built has three files of actual code. The memory system is handled by ByteRover. The response generation is handled by Groq. The only thing you write is the glue — and the glue is twenty lines.

The bottom line: When your agent makes a mistake, you shouldn't have to guess why. You should be able to open a markdown file and read what it knew. An agentic memory tool like ByteRover makes that possible.

Share the following prompt with your LLM to generate the demo code discussed in this post.

Prompt: Reconstruct customer-support-agent-101

Build a Node.js (ES modules) project named support-agent (folder name can be customer-support-agent-101) that implements an interactive CLI customer-support agent for a fictional Indian e-commerce brand ShopEase.

Stack

Runtime: Node, "type": "module" in package.json

Dependencies: groq-sdk (^0.9.x), dotenv (^16.x)

Scripts:

"curate": "node src/curate.js"

"chat": "node src/agent.js"

External tool (documented in README comments / console messages): ByteRover CLI (brv). User installs via ByteRover’s install script; agent assumes brv is on PATH and a local daemon/context tree works.

Environment

Add .env.example with: GROQ_API_KEY=your_groq_api_key_here

Load env with import "dotenv/config" where needed

.gitignore: node_modules/, .env, .DS_Store, .byterover-*.xml, .brv/_queue_status.json, .brv/config.json, .claude/settings.local.json

Knowledge base (docs/)

Create three markdown files with realistic ShopEase policy and FAQ content (Indian context: ₹, pincode, partners like BlueDart/Delhivery, etc.):

docs/shipping-policy.md — dispatch timelines, shipping partners, failed delivery attempts (3 attempts → return → refund window), damaged-in-transit procedure, India-only shipping, no PO boxes, heavy items (20kg+) timelines, COD limits (e.g. up to ₹10,000), COD + EMI note

docs/refund-policy.md — eligibility, refund timelines table (UPI, net banking, cards, wallet, EMI, COD→wallet only), partial refunds, tracking, disputes (refunds@shopease.in, 1800-123-4567), non-refundable categories

docs/faq.md — sections: Orders, Payments, Shipping & Delivery, Returns & Refunds, Account & Security (place/cancel orders, wrong item, payment failure, coupons, delivery timelines, free shipping threshold, tracking, return window, refund times, password reset, addresses, account deletion)

src/query.js

Export queryMemory(question) that runs brv query "<sanitized>" via child_process.exec + promisify, timeout 30s

Escape double quotes in the user question for the shell

On failure or empty useful stdout, return null; log stderr/helpful hints

If stdout indicates ByteRover cannot connect to API / LLM, hint running npm run curate first

src/curate.js

Resolve project root from import.meta.url; docs/ path = path.join(PROJECT_ROOT, "docs")

Poll .brv/_queue_status.json every ~3s (max ~180s): treat success as !processing && processed > 0; failure if failed > 0

Flow:

brv vc init (cwd = project root, timeout ~60s)

Require GROQ_API_KEY; else exit with message to copy .env.example → .env

brv providers connect groq -k <key> -m llama-3.1-8b-instant (timeout ~30s)

brv curate "<description>" -d <DOCS_DIR> --detach where description is like: ShopEase customer support knowledge base. FAQs, shipping policy, and refund policy. Organize by topic: orders, payments, shipping, returns, account.

Wait for curation via queue status file; print success/failure guidance (brv vc status, install URL)

Use clear console banners and user-facing error messages

src/agent.js

Groq client from env GROQ_API_KEY

Model: llama-3.3-70b-versatile

conversationHistory array in memory for the session

SYSTEM_PROMPT: ShopEase support agent; must use only retrieved ByteRover context; polite/concise/warm; if missing info use fallback line with 1800-123-4567 and refunds@shopease.in; don’t invent policies; ~150 word soft limit; acknowledge order IDs even if not lookup-capable

chat(userMessage):

Print “Querying ByteRover memory…” then await queryMemory(userMessage)

If no context, return friendly “knowledge base unavailable” message with support phone

Build enriched user message: header RETRIEVED CONTEXT FROM BYTEROVER KNOWLEDGE BASE: + context + CUSTOMER QUESTION:

Push that string as user message to history

Call Groq chat.completions.create with system + full history, temperature: 0.3, max_tokens: 512

Append assistant reply to history (assistant content = answer only, not the injected context block)

main(): readline REPL; banner “ShopEase Support Agent / ByteRover Memory + Groq (Llama 3.3 70B)”; exit quits; loop questions

Quality bar

Match behavior above closely (timeouts, models, prompts, file layout)

No secrets in repo; document npm install, .env, npm run curate once, then npm run chat

Code style: clear comments explaining ByteRover tiers / curation purpose where the original had them


If this was useful, your AI agent now never forgets topics or conversations and is smarter than before. Like the post and share it with someone building AI agents. And drop a comment: I'd love to know what you're building.